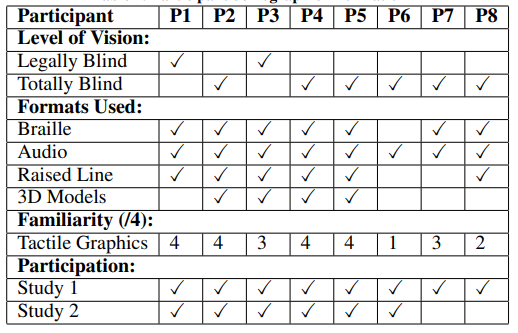

Australian researchers Samuel Reinders, Matthew Butler, and Kim Marriott are exploring ways to improve 3D printed tools for people who are blind or have low vision (BLV). In their recently published study, ‘“Hey Model!” – Natural User Interactions and Agency in Accessible Interactive 3D Models,’ the researchers discuss the issues BLV users face and the changes that would help them, and explore new features and preferred modes of interaction.

3D printing has played a role in a variety of projects and programs for BLV people in the past few years, from allowing them to experience famous art to creating interactive sculpture and developing campaigns to help blind children learn. While some 3D printing programs have centered on making braille labels, such efforts often fall short because many blind people do not read braille fluently. Audio labels would logically be more helpful, but the researchers saw a real need to ask BLV people about their actual preferences for interaction.

In the first part of their study, the researchers set out to understand which interaction strategies are ‘most natural,’ using a Wizard of Oz methodology, in which a human ‘wizard’ supplies the model’s auditory responses so that it appears to be interactive. This let the eight participants interact with the 3D models without any technological limitations. Experiments lasted from one to two hours, with participants using their smartphones and either Siri or Google to complete tasks such as reading, answering messages, making calls, searching for public transportation routes, and even requesting to hear jokes.

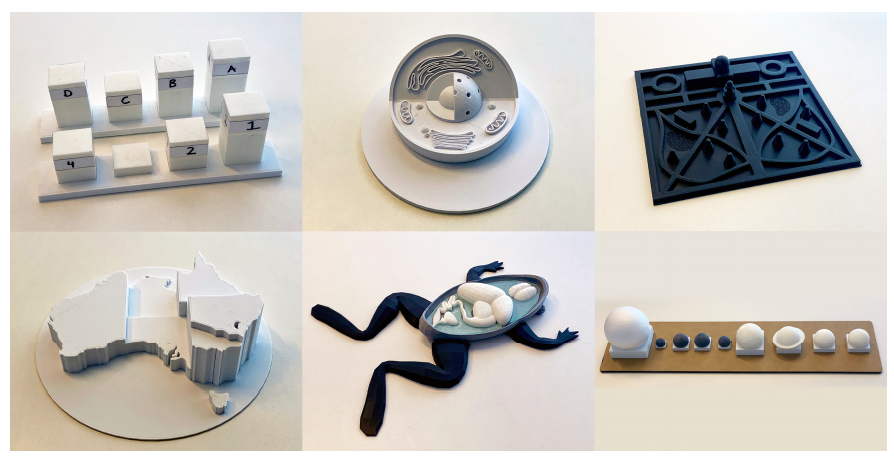

The six models used were chosen to vary in their application domain, the kinds of tasks they might support, and complexity. In all, they consisted of the following, with at least two participants exploring each:

- Two bar charts with removable bars

- Model of an animal cell

- Map of a popular Melbourne public park

- Thematic map of Australia

- Frog dissection model with removable organs

- Solar system model with removable planets

“These were intended to elicit desired interaction behaviors when components were removed or reassembled, as well as to determine whether participants removed components in order to compare them,” stated the researchers.

After interacting with the models, participants were interviewed about their thoughts on and comfort levels with the 3D printed models, and were asked whether ‘differing agency capabilities’ would be useful. The interviews were recorded on video with audio, and all of the recordings were transcribed.

3D models used in Study 1 (left-to-right top-to-bottom): a) Two bar charts of average city temperatures with removable bars; b) Animal cell; c) Park map; d) Thematic map of the population of Australia; e) Dissected frog with removable organs; f) Solar system with removable planets

“The use of removable parts where appropriate proved to be a key design choice,” stated the researchers. The parts supported comparison (such as the size of planets) and also made for more compelling experiences, as one response put it: ‘Being able to pick them up? Yeah, I liked it … I like to be able to hold them in terms of the density and size, it is a bit hard to tell when you don’t pick them up.’

“Some design choices caused confusions. Most notable was the lack of an equator on the Earth in the solar system model, and the inclusion of Saturn’s ring, which was confused for the Earth’s equator.”

The second study centered on validating a more functional interactive 3D model (I3M), integrating the interaction techniques and agent functionality identified in the previous study. The researchers chose the solar system as the one model to prototype further. As they noted, the model was ‘enjoyed immensely by all participants,’ and one individual added that they had greatly enjoyed having access to educational models, in part because they had lacked access to such resources at school.

“Indeed there seemed to be a knowledge gap regarding the solar system with most of the participants who used this model in Study 1,” stated the researchers.

The solar system model was chosen as the researchers realized it could be used for:

- Tapping gestures to extract auditory information

- Optional overview

- On/Off functionality

- Braille labelling

- Conversational agent interface

- Model intervention

To ask a question, participants first said ‘Hey Model!’ (a wake phrase modeled on Apple’s ‘Hey Siri!’). Experiments were one to two hours long, depending on the enthusiasm of the participant.
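The interaction pattern behind the prototype’s feature list can be pictured with a small sketch. This is not the researchers’ implementation: the class name, the slot numbering, and the two stored facts are hypothetical and chosen only for illustration. It assumes a model that speaks when tapped or addressed with the wake phrase, stays silent otherwise, and steps in only when a part is placed in the wrong position.

```python
from dataclasses import dataclass, field

WAKE_PHRASE = "hey model"  # the phrase used by participants, per the article

# Hypothetical audio labels and slot layout, for illustration only.
FACTS = {
    "earth": "Earth is the third planet from the Sun.",
    "saturn": "Saturn is the sixth planet from the Sun and has rings.",
}
CORRECT_SLOT = {"earth": 3, "saturn": 6}


@dataclass
class SolarSystemModel:
    powered_on: bool = True
    log: list = field(default_factory=list)  # everything the model has said

    def _say(self, text):
        self.log.append(text)
        return text

    def on_tap(self, part):
        """A tap on a part returns its audio label (user-initiated)."""
        if not self.powered_on:
            return None
        return self._say(FACTS.get(part, "No information for this part."))

    def on_speech(self, utterance):
        """Answer only when the utterance starts with the wake phrase."""
        if not self.powered_on:
            return None
        text = utterance.lower().strip()
        if not text.startswith(WAKE_PHRASE):
            return None  # low agency: stay silent unless addressed
        question = text[len(WAKE_PHRASE):].strip(" ,!?.")
        for part, fact in FACTS.items():
            if part in question:
                return self._say(fact)
        return self._say("Sorry, I do not know that yet.")

    def on_place(self, part, slot):
        """Intervene only when a part is put in the wrong slot."""
        if not self.powered_on:
            return None
        expected = CORRECT_SLOT.get(part)
        if expected is not None and slot != expected:
            return self._say(f"{part.capitalize()} belongs in slot {expected}.")
        return None  # correct placement: no unprompted speech
```

In this sketch, `SolarSystemModel().on_speech("Tell me about Earth")` returns nothing because the wake phrase is missing, while placing a part in the wrong slot is the only event that makes the model speak without being asked.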

“When talking to the model, participants treated it as a conversational agent and indicated that they preferred more intelligent models that support natural language and which, when appropriate, could provide guidance to the user,” concluded the researchers. “Participants wished to be as independent as possible and establish their own interpretations. They wanted to initiate interactions with the model and generally preferred lower model agency. However, they did want the model to intervene if they did something wrong such as placing a component in the wrong place.

“Such physically embodied conversational agents raise many interesting research questions, including their perceived agency, autonomy and acceptance by the end user. There are also many questions to be answered on how such agents can be implemented. A major focus of our future research will be to design and construct a fully functional prototype, conduct more extensive user evaluations with a variety of models, including maps, and to explore whether model agency preferences differ with age and environment.”

[Source / Images: ‘“Hey Model!” – Natural User Interactions and Agency in Accessible Interactive 3D Models’]
