DARPA Develops Virtual Eye That Captures a Real Time Virtual Reality View Using Two Cameras

During a disaster, first responders benefit from one thing above all else: accurate information about the environment they are about to enter. Foreknowledge of a building's layout and the locations of impassable obstacles, fires or chemical spills can be the difference between life and death for anyone trapped inside. Currently, first responders must rely on their own experience and observations, or on a drone sent in ahead of them that sends back an unreliable 2D video feed. Neither option is optimal, and many victims are likely to perish before they are discovered or before the area is deemed safe enough to enter.

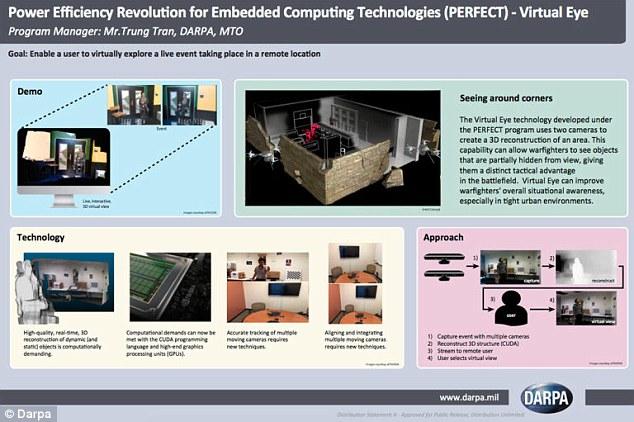

But a team at the Defense Advanced Research Projects Agency (DARPA) has developed technology that lets first responders explore a disaster area without putting themselves at risk. Virtual Eye is a software system that captures and transmits video feeds and converts them into a real-time 3D virtual reality experience. It combines cutting-edge 3D imaging software, powerful mobile graphics processing units (GPUs) and the video feeds from two cameras, any two cameras. This gives first responders (soldiers, firefighters or anyone, really) the option of virtually walking through a real environment such as a room, bunker or other enclosed area without physically entering it.

“The question for us is, can we do more with the information we have? Can we extract more information from the cameras we’re using today? Understanding what we see is critical to making the right decisions in the battlefield. We can create a 3D image by looking at the differences between two images, understanding the differences and fusing them together,” explained Trung Tran, the program manager leading Virtual Eye’s development at DARPA’s Microsystems Technology Office.

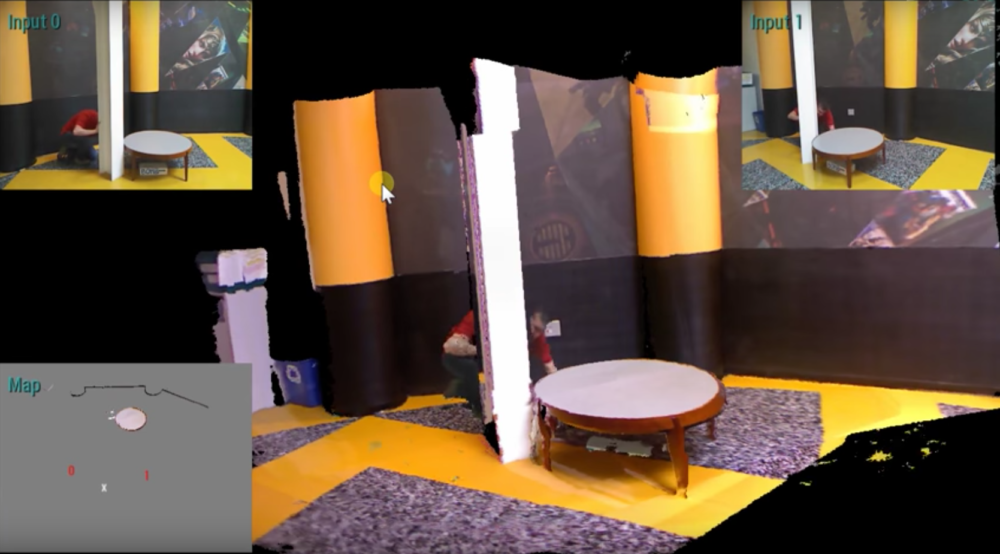

Users of Virtual Eye would be able to note the layout, visualize hazards, identify optimal paths of entry or potentially locate survivors, all without personal risk. Two drones or robots, each outfitted with a camera, would be sent into a questionable environment and positioned at different points in the room with opposing viewpoints. The Virtual Eye software would then fuse both video feeds into a 3D view, using its 3D imaging software to extrapolate any missing data so that the real-time virtual reality feed is complete.
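DARPA has not published how Virtual Eye fuses its feeds, so the following is only a textbook illustration of why two viewpoints are enough to place a point in 3D. It assumes each camera's position is known and that the same feature has been matched in both images, which gives one viewing ray per camera. The function name `triangulate_midpoint` and all values are hypothetical.

```python
# Illustrative sketch only: midpoint triangulation of two viewing rays.
# This is NOT the Virtual Eye algorithm, which the article does not describe.

def triangulate_midpoint(origin_a, dir_a, origin_b, dir_b):
    """Estimate a 3D point from two camera rays.

    Each ray starts at a camera position (origin) and points toward the
    same feature as seen by that camera (dir). Real rays rarely intersect
    exactly, so the midpoint of their closest approach is returned.
    """
    def dot(u, v):
        return sum(x * y for x, y in zip(u, v))

    w = [a - b for a, b in zip(origin_a, origin_b)]
    a = dot(dir_a, dir_a)
    b = dot(dir_a, dir_b)
    c = dot(dir_b, dir_b)
    d = dot(dir_a, w)
    e = dot(dir_b, w)

    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are parallel; depth cannot be recovered")

    # Parameters of the closest points along each ray.
    s = (b * e - c * d) / denom
    t = (a * e - b * d) / denom

    closest_a = [o + s * k for o, k in zip(origin_a, dir_a)]
    closest_b = [o + t * k for o, k in zip(origin_b, dir_b)]
    return [(p + q) / 2 for p, q in zip(closest_a, closest_b)]


# Two hypothetical cameras four metres apart, both seeing the same feature.
point = triangulate_midpoint(
    origin_a=[0.0, 0.0, 0.0], dir_a=[1.0, 2.0, 3.0],
    origin_b=[4.0, 0.0, 0.0], dir_b=[-3.0, 2.0, 3.0],
)
print(point)
```

Repeating this for many matched features yields a sparse 3D point set. A real-time system would also need camera calibration, feature matching and some way to fill in regions neither camera sees, none of which is shown here.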

Here is some video of the Virtual Eye system in action:

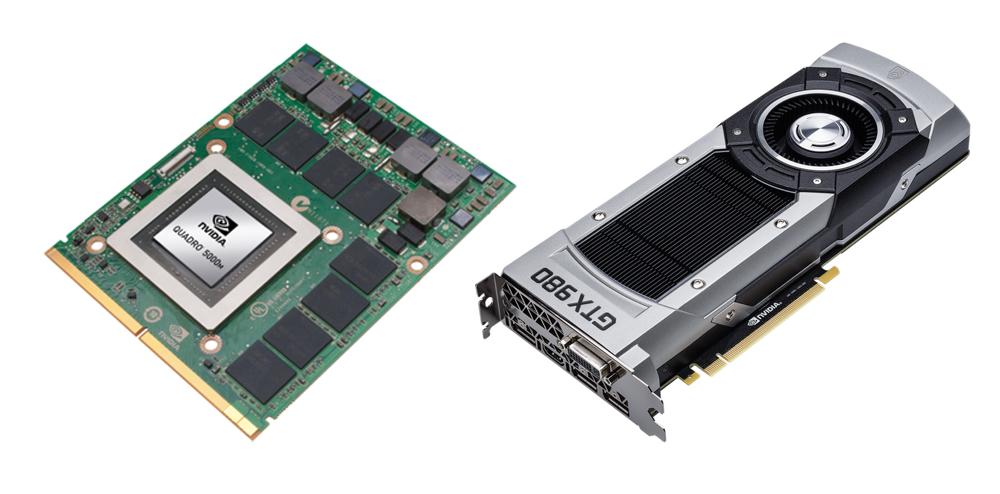

The Virtual Eye system runs on NVIDIA mobile Quadro and GeForce GTX GPUs, which are small enough to be portable but powerful enough to generate the virtual reality view. The NVIDIA GPUs were chosen because they have the muscle to accurately stitch the two video feeds together and extrapolate the 3D data in real time while still fitting inside a laptop. Currently, the Virtual Eye system can only combine data from two cameras; however, Tran expects that to change soon. The DARPA team hopes to have a new demo version capable of combining up to five camera feeds by next year.

While the system was created specifically for military, emergency and battlefield use, it has plenty of potential civilian applications as well, as does most technology developed by DARPA. The technology could be used to broadcast sporting events or live performances in streaming 3D virtual reality with only a handful of cameras. It would also allow users to visit locations anywhere in the world, from museums to Mount Everest, without leaving their homes. Discuss this software over in the DARPA 3D Imaging Visual Eye forum at 3DPB.com.

[Source: DARPA]