Within the next few years, virtual reality is going to be a huge part of our lives. Not only will it affect the way we consume media like movies, television and video games, it will also be integrated with our social media experiences, shopping and business meetings, and it will even let us take virtual tours of homes, museums and landmarks. But one of the primary limitations of virtual reality technology is that it entirely cuts users off from the real world, limiting their ability to interact with their environment while wearing VR headsets and goggles.

Integrating the virtual world with the real world is the obvious next step, and even before virtual reality has had time to get comfortable, new technologies like augmented reality and mixed reality are already being explored. Augmented reality lets users wearing devices similar to the much-maligned Google Glass view the real world while virtual objects, visible only to those wearing the device, are added to their field of vision. These virtual objects can be placed at specific points in the world using GPS technology and viewed in 3D space in real time. Mixed reality, or hybrid reality, is when these virtual elements are merged with physical elements so that both coexist in real space and interact in real time.

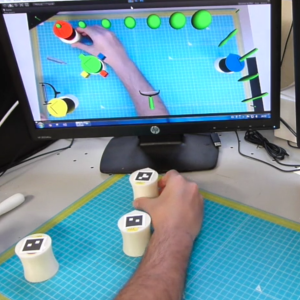

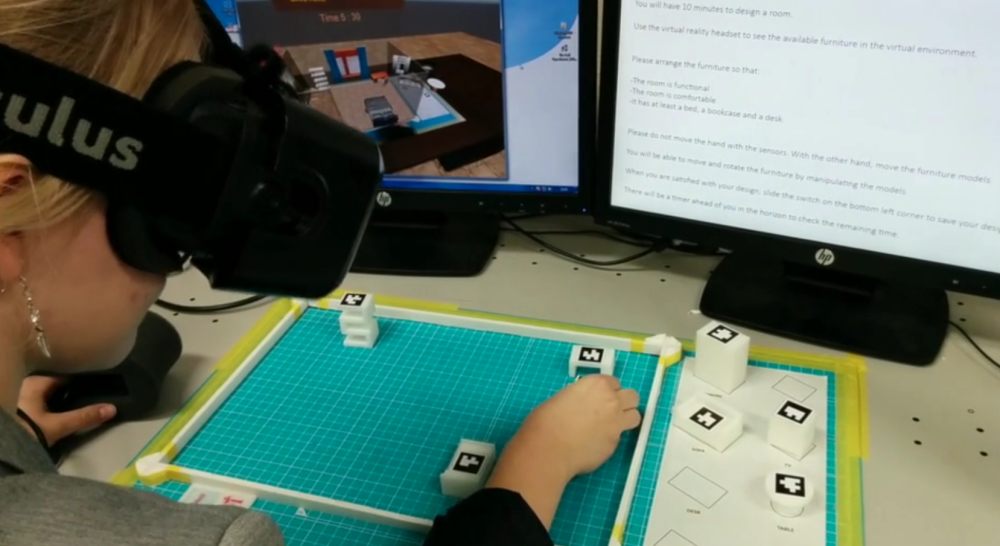

A group of researchers at the Tokyo Institute of Technology, often called Tokyo Tech, led by French postdoctoral researcher Pierre-Antoine Arrighi, is exploring tools that allow users to manipulate real-world objects within a virtual space. Arrighi is also the co-founder of Aniwaa.com, the IMDb of 3D printing, and his extensive 3D printing experience was key in designing the Mixed Reality Tool prototype. Developed in Tokyo Tech’s cm.Design.Lab with professor Céline Mougenot, the tool includes a virtual room with virtual furnishings that are viewable using an Oculus Rift headset. The virtual furnishings have corresponding real-world analogs called Tangible User Interfaces (TUIs); when the user manipulates a TUI, the matching virtual object moves in real time within the virtual environment.

The virtual environment created by the researchers is a home interior design simulation tool that lets the user plan a room layout by rearranging furnishings. In a commercial setting, the virtual furnishings could represent the entire product catalog of a furniture seller, which a potential customer could cycle through and view in 3D space. The environment can be created by simply inputting the user’s real-world room measurements. The small TUIs, 3D printed on a Zortrax M200, are tracked using an augmented reality marker system that allows the user to move the virtual furnishings throughout the digital environment, trying out new room layouts and testing combinations of different products.
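The article does not describe how the tracked TUI positions are converted into positions in the virtual room, so the following is only a minimal sketch of one way it could work. It assumes the marker tracker reports each TUI's position on a tabletop in centimeters plus a rotation angle, and that the tabletop is scaled linearly onto the room measurements the user entered. All names here (`MarkerPose`, `FurniturePose`, `table_to_room`) are hypothetical and are not taken from the researchers' prototype.

```python
from dataclasses import dataclass


@dataclass
class MarkerPose:
    """Pose of one tracked TUI on the tabletop (hypothetical tracker output)."""
    x_cm: float       # position along the table's width
    y_cm: float       # position along the table's depth
    angle_deg: float  # rotation about the vertical axis


@dataclass
class FurniturePose:
    """Pose of the matching virtual furnishing inside the virtual room."""
    x_m: float
    y_m: float
    angle_deg: float


def table_to_room(marker, table_size_cm, room_size_m):
    """Scale a tabletop pose onto the virtual room's floor plan.

    Assumption: each axis is scaled independently, so the tabletop
    always covers the whole room even if the aspect ratios differ.
    """
    table_w, table_d = table_size_cm
    room_w, room_d = room_size_m

    # Normalise to the 0..1 range and clamp so a TUI pushed past the
    # table edge cannot place furniture outside the room's walls.
    u = min(max(marker.x_cm / table_w, 0.0), 1.0)
    v = min(max(marker.y_cm / table_d, 0.0), 1.0)

    return FurniturePose(
        x_m=u * room_w,
        y_m=v * room_d,
        angle_deg=marker.angle_deg % 360.0,
    )


# A TUI in the middle of a 60 cm x 40 cm table, for a 4 m x 3 m room.
sofa = table_to_room(MarkerPose(30.0, 20.0, 370.0), (60.0, 40.0), (4.0, 3.0))
print(sofa)
```

Scaling each axis independently keeps the sketch short, but it would stretch movements if the table and room have different proportions; a real tool might instead use a single uniform scale and leave part of the table unused.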

The virtual world was generated using the Oculus Rift Development Kit 2, which is a vast improvement over the first DK. It includes low-persistence OLED displays that help to eliminate motion blur and judder, two of the largest contributors to the simulator sickness caused by VR devices. The second-generation DK also includes more precise, low-latency positional tracking, which lets small TUI movements be reproduced more accurately within the VR space. While this is just a very early prototype, it is some of the earliest research being conducted on technology that allows interaction with the real world and the virtual world at the same time.

It is easy to imagine the tool being used in environments like a gym to make workouts more relaxing, letting wearers exercise in a location of their choosing without having their view tainted by fellow gym-goers. It also lends itself very well to collaborative design projects, where two users, who could be on opposite sides of the planet, each use their own TUIs to work together on a design. And of course the same technology could be paired with immersive touch technology, or even teledonics that simulate human touch, to enable virtual interactions between two or more people. Discuss this new tool in the 3D Printed Tangible User Interface forum over at 3DPB.com.
