Everything in life and work is better if you’ve got a smooth rhythm going. But according to MIT, soon it’s going to be all about some serious new moves with algorithms–and polarized light–to help us get our groove on more conveniently with 3D printing–and other technology–in the future.

Inspired by previous works and studies regarding inverse rendering and shape recovery techniques, Polarized 3D: Depth Sensing with Polarization Cues has been authored by Achuta Kadambi, a PhD student in the MIT Media Lab; his thesis advisor, Ramesh Raskar, associate professor of media arts and sciences in the MIT Media Lab; Boxin Shi, who was a postdoctoral student in Raskar’s group and is now a research fellow at the Rapid-Rich Object Search Lab; and Vage Taamazyan, a master’s student at the Skolkovo Institute of Science and Technology in Russia, which MIT helped found in 2011.

The team is taking those earlier ideas to another level: using a framework of algorithms that exploits the polarization of light, allowing for 3D imaging at far finer resolution. And much, much easier.

With polarized light already put to work in polarized sunglasses and 3D movie systems, these scientists now see numerous, and undeniably quite futuristic, ways to use this particular science.

The MIT researchers state that this could even be used in the developing technology for driverless cars. What is most promising today, though, is that it may lead to high-resolution 3D cameras that could be integrated into smartphones, giving users a friendly process for taking a simple photo of an object and then 3D printing it with ease.

In their paper, recently presented at the International Conference on Computer Vision 2015 in Santiago, Chile, the authors explained how it is possible to start with a coarse depth map and then enhance it with shape information derived from polarization. They point to the polarizing filters already used in conventional 2D photography as an example.

“Today, they can miniaturize 3D cameras to fit on cellphones,” says Kadambi. “But they make compromises to the 3D sensing, leading to very coarse recovery of geometry. That’s a natural application for polarization, because you can still use a low-quality sensor, and adding a polarizing filter gives you something that’s better than many machine-shop laser scanners.”

Their work is unique in that the goal is to recover a shape fully in 3D, something not fully achieved by long-established work such as the Fresnel equations, which link surface normals with material and polarimetric properties.

“This is a new tool for 3D sensing and finds use in virtual reality, autonomous navigation, and industrial inspection. In particular, the proposed technique is suited in areas that require precise 3D depth (e.g. 3D scanning),” states the team.

Polarization is centered around bounce. This is why those polarized sunglasses worked so nicely while you were on that fishing trip in Florida, out in that unpolarized sun. Light that bounces off a surface at a glancing angle comes back partially polarized, so lenses that block that orientation cut the glare and make the scene easier to see. This ‘bounce’ is very important in the process of polarization: the orientation of the reflected light can go from up and down to side to side, depending on the angle of the surface it struck.

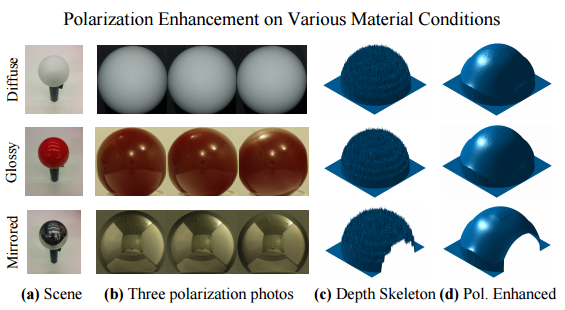

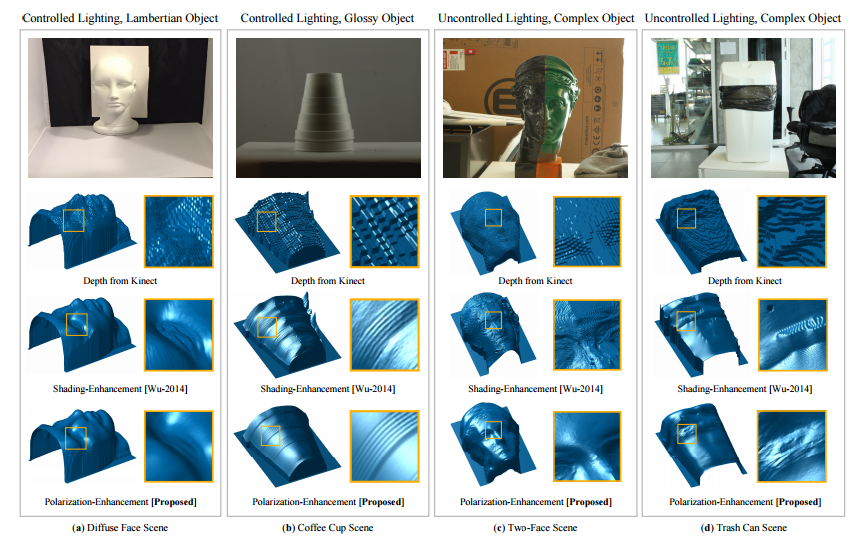

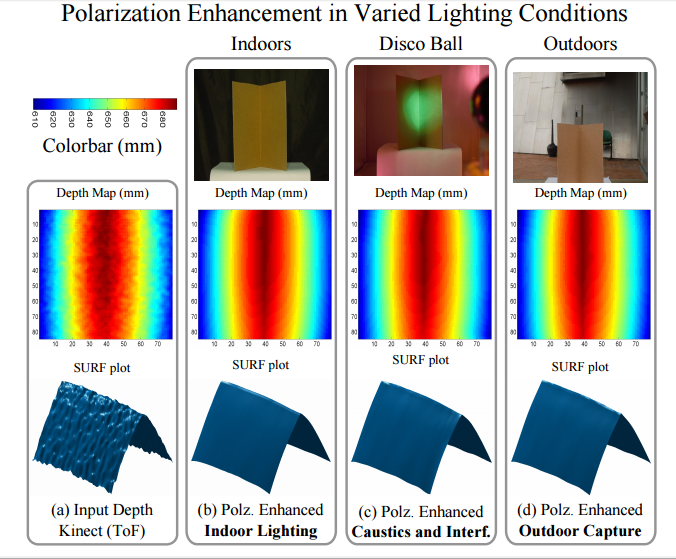

To settle the ambiguities that arise when shape is inferred from polarization alone, the researchers used a Microsoft Kinect, which gauges depth using reflection time, to supply a coarse depth estimate. In a battery of experiments, they took three photos of each object, rotating the polarizing filter between shots, and their algorithms compared the light intensities in the resulting images. Their discovery was that while the Kinect can already resolve small details on its own, with polarization information they could resolve features ‘in the range of tens of micrometers, or one-thousandth the size.’
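The three-photo measurement can be illustrated with a minimal sketch. It assumes an ideal linear polarizer rotated to 0, 45 and 90 degrees (the article does not state the angles the researchers used) and applies the standard linear Stokes relations to one pixel. The function names are hypothetical, and this is not the authors' code.

```python
import math


def simulate_intensity(mean, dolp, aolp, filter_angle):
    """Intensity behind an ideal linear polarizer (textbook sinusoid model)."""
    return mean * (1.0 + dolp * math.cos(2.0 * (filter_angle - aolp)))


def polarization_from_three_shots(i0, i45, i90):
    """Estimate polarization at one pixel from three filtered exposures.

    Assumes the filter was set to 0, 45 and 90 degrees (an assumption of
    this sketch). Returns (degree, angle) of linear polarization, with the
    angle in radians in [0, pi).
    """
    s0 = i0 + i90              # total intensity
    s1 = i0 - i90              # horizontal vs. vertical component
    s2 = 2.0 * i45 - i0 - i90  # diagonal component
    if s0 <= 0.0:
        return 0.0, 0.0        # no light: polarization is undefined
    degree = math.hypot(s1, s2) / s0
    # The angle is only defined modulo pi; this is one source of the
    # ambiguity that a coarse depth map can help resolve.
    angle = (0.5 * math.atan2(s2, s1)) % math.pi
    return degree, angle


# Synthetic pixel: 30% polarized, oriented at 0.4 rad.
shots = [simulate_intensity(100.0, 0.3, 0.4, a)
         for a in (0.0, math.pi / 4, math.pi / 2)]
degree, angle = polarization_from_three_shots(*shots)
print(round(degree, 3), round(angle, 3))
```

The degree and angle of polarization recovered this way constrain the surface orientation at each pixel, but not uniquely, which is why a second source of depth information is useful.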

Their technology could also, feasibly, help with driverless cars, whose vision algorithms can stop working in bad weather because the light becomes unpredictably scattered. The researchers suggest that polarization information could help mitigate that scattering.

“Mitigating scattering in controlled scenes is a small step,” Kadambi says. “But that’s something that I think will be a cool open problem.”

While some of the mechanics used in the original experiment, such as rotating a polarizing filter between shots, may not translate realistically to smartphone cameras, a more scalable approach would be to overlay grids of tiny polarization filters on individual pixels.

“The work fuses two 3D sensing principles, each having pros and cons,” says Yoav Schechner, an associate professor of electrical engineering at Technion — Israel Institute of Technology in Haifa, Israel. “One principle provides the range for each scene pixel: This is the state of the art of most 3D imaging systems. The second principle does not provide range. On the other hand, it derives the object slope, locally. In other words, per scene pixel, it tells how flat or oblique the object is.”
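The idea of fusing a range measurement with a local slope measurement can be sketched in one dimension. The example below is a toy least-squares blend, not the method from the paper: it assumes a noisy depth profile (standing in for the Kinect) and accurate slopes between neighboring pixels (standing in for the polarization cue), and the function names and the `weight` parameter are hypothetical.

```python
def solve_tridiagonal(lower, diag, upper, rhs):
    """Thomas algorithm. lower[i] multiplies x[i-1], upper[i] multiplies x[i+1]."""
    n = len(diag)
    c = [0.0] * n
    d = [0.0] * n
    c[0] = upper[0] / diag[0]
    d[0] = rhs[0] / diag[0]
    for i in range(1, n):
        denom = diag[i] - lower[i] * c[i - 1]
        c[i] = upper[i] / denom
        d[i] = (rhs[i] - lower[i] * d[i - 1]) / denom
    x = [0.0] * n
    x[-1] = d[-1]
    for i in range(n - 2, -1, -1):
        x[i] = d[i] - c[i] * x[i + 1]
    return x


def fuse_depth_and_slope(coarse_depth, slopes, weight=100.0):
    """Minimize sum (z[i] - coarse[i])^2 + weight * sum (z[i+1] - z[i] - slope[i])^2.

    coarse_depth supplies absolute range; slopes supply fine local shape.
    """
    n = len(coarse_depth)
    if n < 2 or len(slopes) != n - 1:
        raise ValueError("need n >= 2 depths and n - 1 slopes")
    lower = [0.0] + [-weight] * (n - 1)
    upper = [-weight] * (n - 1) + [0.0]
    # End pixels have one neighbor, interior pixels have two.
    diag = [1.0 + weight * (1 if i in (0, n - 1) else 2) for i in range(n)]
    rhs = []
    for i in range(n):
        left = slopes[i - 1] if i > 0 else 0.0
        right = slopes[i] if i < n - 1 else 0.0
        rhs.append(coarse_depth[i] + weight * (left - right))
    return solve_tridiagonal(lower, diag, upper, rhs)


true_depth = [0.0, 1.0, 2.0, 3.0]
noisy_depth = [0.5, 0.5, 2.5, 2.5]   # coarse range with errors
slopes = [1.0, 1.0, 1.0]             # accurate local slope
fused = fuse_depth_and_slope(noisy_depth, slopes)
print([round(z, 2) for z in fused])
```

In this toy setup the slopes fix the fine shape while the coarse depth pins down the overall offset that slopes alone cannot provide, which mirrors the complementary roles Schechner describes.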

While the work of the MIT researchers sounds as if it may improve and expand numerous methods, as well as innovative works in progress, it should also offer value in a range of current and more traditional technologies which rely on imaging. Translated to the smartphone, it may truly offer the ability to revolutionize other processes that could be conveniently controlled by apps.

“The work uses each principle to solve problems associated with the other principle,” Schechner explains. “Because this approach practically overcomes ambiguities in polarization-based shape sensing, it can lead to wider adoption of polarization in the toolkit of machine-vision engineers.”

Polarization enhancement works in a range of lighting conditions (real experiment). (a) ToF Kinect, due to multipath, fails to capture an accurate corner. (b) Polarization enhancement indoors. (c) Polarization enhancement under disco lighting. The disco ball casts directional uneven lighting into the corner and introduces caustic effects. (d) Polarization enhancement outdoors on a partly sunny, winter day. (Source: Polarized 3D: Depth Sensing with Polarization Cues)
