For years, people have speculated about when, or if, 3D scanning would come to mobile phones. Apple’s acquisition of several 3D scanning companies and Google’s Project Tango fueled endless discussions. The PrimeSense acquisition was much talked about in 2013, the LinX acquisition fueled a lot of speculation in 2015, the TrueDepth camera was going to change everything in 2017, and ARKit has always been exciting for us, since things like object recognition and image reconstruction would also benefit 3D printing. Reality, however, is often more like trickling molasses than the lightning advances we dream of. Late nights in bars and hushed hallway whispers brought talk of huge numbers of sensors being bought and of work on file-fixing software. Supposedly imminent investments and releases turned out to take years to complete.

With billions of devices worldwide, 3D scanning on smartphones could make 3D scanning a technology that many people use day to day. What’s more, a native 3D scanning ability could let us sculpt objects in thin air or customize objects through simple apps. It would in effect bring the power of software to bear on our problem that no one can 3D model anything. For everyone to get involved in 3D printing they would need a Twitter-simple way to input their designs and ideas.

Mass customization is currently being held back as well, because it takes effort to go to a store and get 3D scanned. If your phone were a good 3D scanner, you could scan yourself and get unique gloves 3D printed, or a golf club, or a headrest for your car. If 3D scanning were available on all phones, mass customization would become an everywhere-available, idle pastime technology subject to near-instant gratification. Phone-based 3D scanning would be just about the best and biggest thing that could happen to our industry, beyond our own efforts to improve ourselves.

The good news is that 3D scanning on phones is here… sort of. This time, it’s finally going to happen…again. Apple’s new iPhones support a number of scanning features that could evolve to be highly impactful for us. Software support for STL and things like object recognition through 3D scanning could be impactful also. On the hardware front, the A14 chip’s Neural Engine will enable machine learning applications. The chip also has 30% more graphics performance and faster image processing that will be very handy for scanning applications.

The TrueDepth camera enables facial recognition. In 2017, this was a structured light scanner using an IR projector. Now it’s time-of-flight. Meanwhile, on the other face of the Apple Pro models, you get three cameras (main, wide, and telephoto), as well as LiDAR. LiDAR is a 3D scanning technology most often used for building-sized or room-sized objects. Apple seems to want to deploy it to really bring AR objects into our living rooms or to enable room-sized scanning applications. It features a time-of-flight scanner that can accurately measure distances and capture scenes in a five-meter range.
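The basic time-of-flight principle can be sketched in a few lines: a light pulse travels to the surface and back, so the distance is half the round-trip time multiplied by the speed of light. This is only the textbook relationship, not a description of how Apple's sensor is implemented; the function names are hypothetical.

```python
# Textbook time-of-flight relationship (not Apple's implementation).
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_distance_m(round_trip_s: float) -> float:
    """Distance to a surface given the round-trip time of a light pulse."""
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse covers the distance twice (out and back), hence the division by 2.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def round_trip_time_s(distance_m: float) -> float:
    """Round-trip time of a light pulse for a surface at the given distance."""
    if distance_m < 0:
        raise ValueError("distance cannot be negative")
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_PER_S


if __name__ == "__main__":
    # At the five-meter range mentioned above, the pulse returns in tens of nanoseconds.
    t = round_trip_time_s(5.0)
    print(f"round trip for 5 m: {t * 1e9:.1f} ns")
```

The very short round-trip times involved are one reason why timing precision limits how fine the depth detail from such a sensor can be.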

For Apple, of course, being able to move realistically in game environments and do things such as virtually try out products will be significant. At home, just the ability to see how big the TV you found online is, or to virtually see how your new standing desk will fit into your house, could be very impactful for eCommerce. Virtually trying on clothes could be huge as well, since online buyers return around 40% of clothing bought on the web.

Meanwhile, the level of detail that you would need for 3D printing may simply not be in the offing. The number of points is probably not going to be high enough. It’s unclear whether we can extract a point cloud, and it’s not yet known how repairing this point cloud would work.

For Apple right now, super accurate measurement and scanning of rooms will open up an entire AR world, as well as a lot of possibilities in apps, such as: try new paint colors, calculate how much wallpaper you need, fit your new kitchen, 3D scan and eBay your objects, calculate your move, sing along with Frank Sinatra, place yourself in a movie scene, 3D emojis, etc. With the call of the virtual and apps so strong, the main attraction for Apple would seem to be in the room, not necessarily in huge accuracy on softball-sized objects. With the commercial benefits mostly in room-scale accuracy, we in 3D printing may find ourselves the ignored Cinderella, as the stepsisters of e-commerce, AR, and gaming get all of the technology directed at them.

Better AR or a lead in AR-based gaming could be the complete future of much of the gaming industry. Apple doesn’t have the luxury of missing that boat. Let’s also not forget that time-of-flight scanners are on phones for another very important reason: to improve our selfies. As much as we often think we in 3D printing are the center of the world, better selfies and better pictures are a huge win for Apple and would make it more competitive against Samsung and the like.

So, are we, as an ignored stepchild, left to toil in the shadows? Maybe not. Whereas full 3D printing support does not yet seem to be in the offing, there are ways around this which may be very fruitful. With increased computing power and generative learning, a lot could very well be possible. Software can help clean up and reconstruct files, making them watertight and manifold for us to print. Eventually, someone will be able to extract enough detail from Apple iPhone scanners to get something to print, and this will let a lot of people unleash their creativity. But we can do something now that may in fact give us a conjoined pathway to a 3D printed Apple phone world.

For that, we’ll have to take a little excursion to the world of military and police snipers. When a sniper is taking a close shot, aiming through the sights may be enough, but longer-range shots are more difficult. Bullets fly in an arc, so for a longer shot, the bullet drop has to be estimated. For extremely long shots, not only the wind but also the curvature of the earth has to be compensated for. One important variable in calculating how to take a certain shot is the range to the target. Newfangled range finders can measure this for you, but a good old analog method is the mil-dot reticle, a measured pattern on your scope’s sight.

“The distance between the centers of any two adjacent dots on a MIL-Dot reticle scope equals 1 Mil, which is about 36″ (or 1 yard) @ 1000 yards, or 3.6 inches @ 100 yards.”

“[T]he easiest way to range a target is to take the height (or width) of the target in yards or meters multiplied by 1000, then divide by the height (or width) of the target in MILs to determine the range to target.”

And an example:

“A 10” tall prairie dog fits in between the vertical crosshair and the bottom of the first MIL dot which equals 0.875 mils. How many yards is it out?”

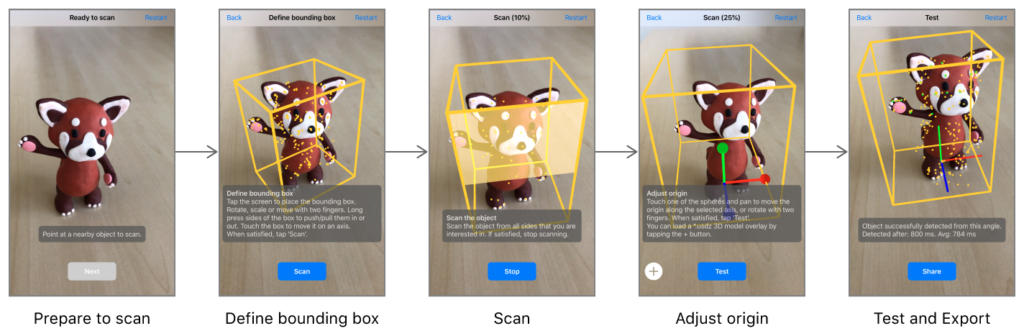
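The quoted ranging rule is simple enough to work through in code. The sketch below applies it to the prairie dog example, converting the 10-inch height to yards first; the function name is hypothetical, and the formula is just the one stated in the quote.

```python
def range_from_mils(target_size: float, size_in_mils: float) -> float:
    """Mil-dot ranging rule: target size times 1000, divided by its size in mils.

    The result is in the same unit as target_size (yards in, yards out).
    """
    if size_in_mils <= 0:
        raise ValueError("size in mils must be positive")
    return target_size * 1000.0 / size_in_mils


if __name__ == "__main__":
    prairie_dog_yards = 10 / 36  # a 10-inch target expressed in yards
    distance = range_from_mils(prairie_dog_yards, 0.875)
    print(f"range: about {distance:.0f} yards")  # about 317 yards
```

Note that the unit conversion matters: the rule only returns yards if the target size goes in as yards.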

In the sniper example, we often don’t know the range to our target, so we take an object of known size and, using the reticle as a reference, estimate the range. Remember that hazy high school math with triangles? If I know some sides, I can calculate the others. With our iPhones, we’re going to be very good at knowing how far away things are, through the many points that the time-of-flight scanner in the LiDAR sensor shoots out. The LiDAR will continually range everything it sees. With Apple’s ARKit, we know we can do object recognition. Through an app, we could, therefore, include many reference objects.

By recognizing Coke cans, specific models of chairs, IKEA furniture, sunglasses, or many other objects, we could compare the range estimate and size with the stored known values and increase the accuracy of our range. We could then also estimate our location in the room relative to all of the known distances over a number of passes. This information could then be compared with the accelerometer and even with GPS. All in all, we will get a much more accurate picture of where the scanner is, how it is oriented, and where everything in the room is. We will also be able to obtain much more exact measurements of a lot of things.
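One way to picture the reference-object idea is as a scale correction: for each recognized object, divide its stored real size by the size measured in the scan, then apply a robust average of those ratios to the whole point cloud. This is a minimal sketch of that idea only. The function names and all the sizes in the example are hypothetical placeholders, not measured values, and a real app would also need to handle recognition errors and per-axis distortion.

```python
from statistics import median
from typing import Iterable, List, Tuple

Point = Tuple[float, float, float]


def scale_correction(references: Iterable[Tuple[float, float]]) -> float:
    """Estimate a scale factor from (known_size, measured_size) pairs.

    The median is used so that one badly recognized object does not
    dominate the estimate.
    """
    ratios = [known / measured for known, measured in references if measured > 0]
    if not ratios:
        raise ValueError("need at least one reference with a positive measured size")
    return median(ratios)


def rescale(points: Iterable[Point], factor: float) -> List[Point]:
    """Apply a uniform scale factor to every point in a point cloud."""
    return [(x * factor, y * factor, z * factor) for x, y, z in points]


if __name__ == "__main__":
    # Hypothetical (known, measured) sizes in meters for three recognized objects.
    refs = [(0.122, 0.118), (0.450, 0.441), (0.750, 0.731)]
    factor = scale_correction(refs)
    cloud = [(0.10, 0.20, 1.50), (0.11, 0.21, 1.52)]
    print(factor, rescale(cloud, factor))
```

A uniform scale is the simplest possible correction; it is chosen here only because it makes the comparison between stored and measured values easy to see.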

If we then 3D scan a number of these reference objects using highly capable industrial 3D scanners, their curves and measurements would be even more accurate, and could be used to fill in the empty parts of our point clouds and describe the objects better. We could even use photogrammetry or another camera-based scanning method to better fill in and obtain textures of the objects we want to 3D print. This process should get us a lot closer to obtaining 3D printable meshes.

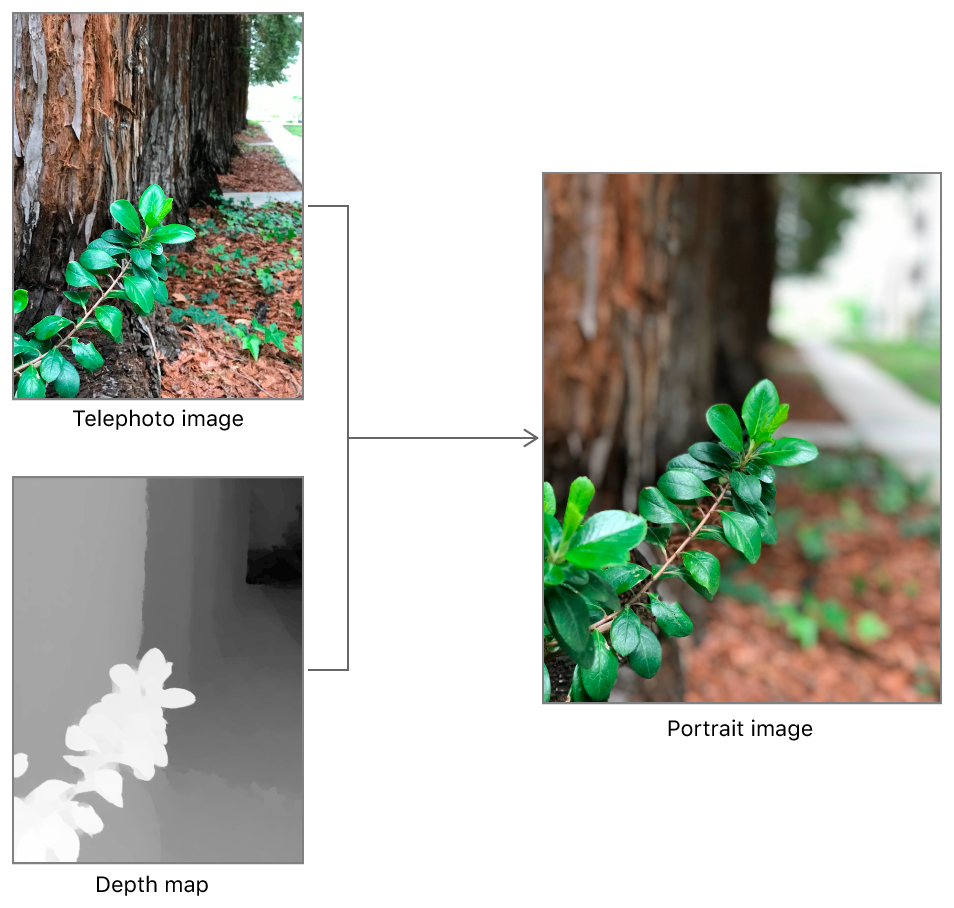

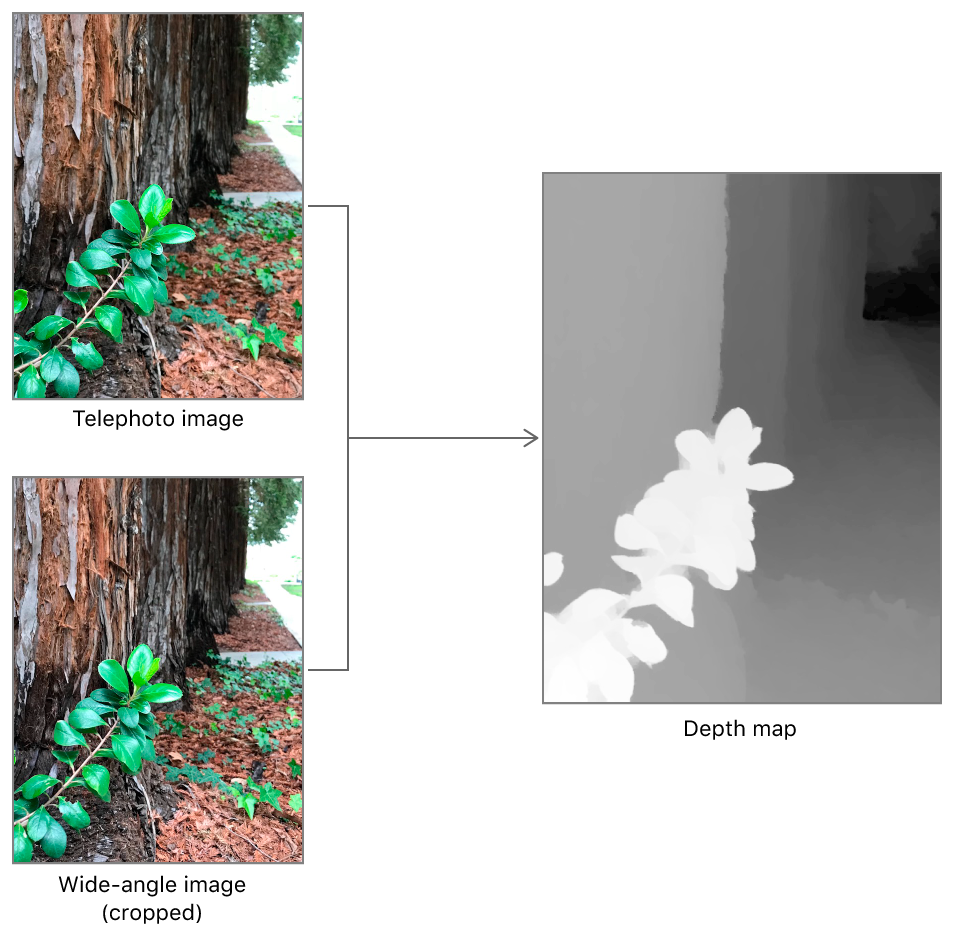

Ideally, of course, the camera data from the other lenses could be compared, as well, and three cameras could take the same image and estimate more clearly its orientation and obtain the color and texture. Indeed, Apple already does this for images:

“[T]he system captures imagery using both cameras. Because the two parallel cameras are a small distance apart on the back of the device, similar features found in both images show a parallax shift: objects that are closer to the camera shift by a greater distance between the two images. The capture system uses this difference, or disparity, to infer the relative distances from the camera to objects in the image, as shown below.”
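The relationship between disparity and distance described in the quote can be sketched with the standard pinhole stereo formula, depth = focal length × baseline / disparity. This is the textbook model for two parallel cameras, not Apple's actual pipeline; the function name and the numbers in the example are hypothetical.

```python
def depth_from_disparity(focal_length_px: float, baseline_m: float,
                         disparity_px: float) -> float:
    """Depth of a feature seen by two parallel cameras (pinhole stereo model).

    focal_length_px: focal length expressed in pixels
    baseline_m:      distance between the two camera centers, in meters
    disparity_px:    horizontal shift of the feature between the two images
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive (zero means infinitely far)")
    return focal_length_px * baseline_m / disparity_px


if __name__ == "__main__":
    # Hypothetical camera: 1000 px focal length, cameras 1 cm apart.
    for disparity in (5.0, 10.0, 20.0):
        depth = depth_from_disparity(1000.0, 0.01, disparity)
        print(f"disparity {disparity:>4} px -> depth {depth:.2f} m")
```

Closer objects shift more between the two images, so a larger disparity gives a smaller depth. With cameras only about a centimeter apart, distant objects produce very small disparities, which limits depth precision at range.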

Then it would be a question of using the neural and image processing capability of the phone to resurface this mesh with correct image data.

Parts of this whole process may be fanciful, but it seems like it could work, at least for obtaining more accurate measurements and files that could be used to print. Coherently applying texture and color information to that file would be an entirely more difficult step, but we suck at color anyway. I’m not 100% sure that this is the answer, but on the whole, I think that, whereas we may not immediately be able to extract a lot of 3D prints from iPhone LiDAR data, we will be able to eventually. In the near term, I would expect that, for some high-contrast materials and items, coupled with good reference items, we could use this technology to 3D scan some 3D printable items.
