As artificial intelligence takes a real hold across the technology sector, we humans can loosen the reins a bit and relax while the innovations we have programmed go forth and create, transform, and improve.

That’s the recent situation for Peter Naftaliev, an AI consultant who blogs at 2d3d.ai and works at Abelians, a small firm of former Israeli Intelligence Corps staffers. He brings us another way to go from 2D to 3D seamlessly, building on previous work in ‘3D Scene Reconstruction from a Single Image’ (noted for somewhat inferior single-object reconstruction but impressive results on natural scene images) and a recent Computer Vision and Pattern Recognition paper, “Mesh R-CNN.”

Regarding the most recent project in reconstruction, Naftaliev states:

“This is the highest quality 3D reconstruction from 1 image research I have seen yet. An encoding-decoding type of neural network to encode the 3D structure of a shape from a 2D image and then decode this structure and reconstruct the 3D shape.”

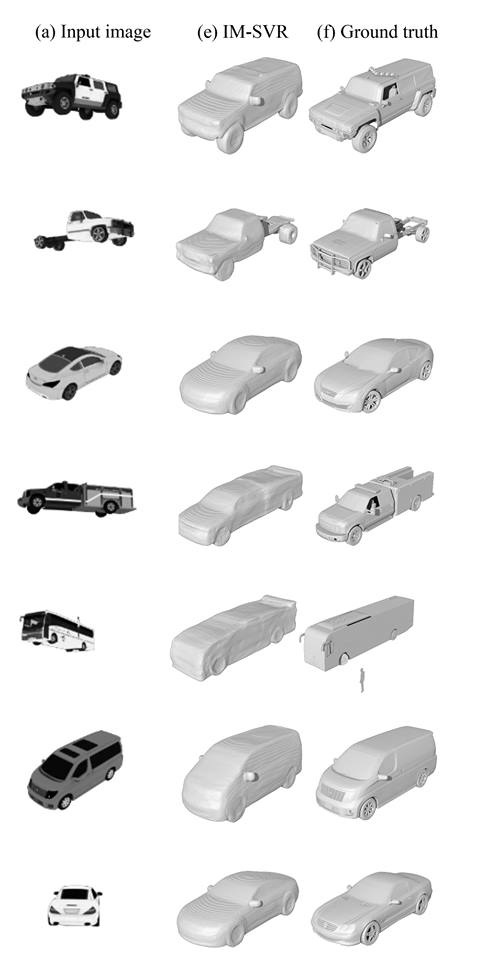

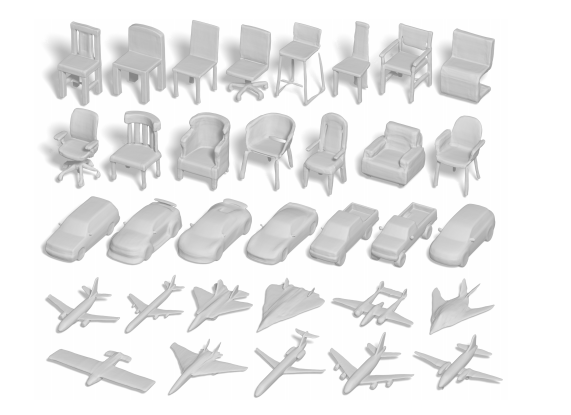

The network takes an input image of 128×128 pixels with a transparent background. Its base output resolution is 64×64×64 voxels, and it ‘can produce output in any required resolution (!) without retraining the neural network.’ Naftaliev also points us to the corresponding paper, ‘Learning Implicit Fields for Generative Shape Modeling,’ by Zhiqin Chen and Hao Zhang, who ‘advocate’ the use of implicit fields for learning generative models of shapes, with the aim of improving the visual quality of the resulting shapes.
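The "any required resolution" claim makes sense once the decoder is seen as a function of continuous point coordinates rather than a fixed voxel grid: to get a finer result, you simply ask it about more points. The sketch below illustrates that idea only. It is not the authors' code; `implicit_field` is a hypothetical stand-in (an analytic sphere rather than a trained network conditioned on an image), and `sample_grid` is an illustrative helper name.

```python
import numpy as np

def implicit_field(points):
    """Stand-in for a trained implicit decoder (assumption: an analytic sphere).

    Takes an (N, 3) array of coordinates in [-0.5, 0.5]^3 and returns one
    value per point: close to 1 inside the shape, close to 0 outside.
    """
    radius = 0.4
    dist = np.linalg.norm(points, axis=1)
    # Smooth inside/outside indicator that is steep near the surface.
    return 1.0 / (1.0 + np.exp((dist - radius) * 200.0))

def sample_grid(field, resolution):
    """Evaluate `field` at the voxel centers of a resolution^3 grid."""
    # Voxel centers along one axis of the cube [-0.5, 0.5].
    axis = (np.arange(resolution) + 0.5) / resolution - 0.5
    xs, ys, zs = np.meshgrid(axis, axis, axis, indexing="ij")
    points = np.stack([xs, ys, zs], axis=-1).reshape(-1, 3)
    values = field(points)
    # Threshold at 0.5 to get a boolean occupancy grid.
    return values.reshape(resolution, resolution, resolution) > 0.5

# The same field, queried at two resolutions with no change to the "model".
coarse = sample_grid(implicit_field, 16)
fine = sample_grid(implicit_field, 64)
```

Both grids describe the same shape; only the number of query points differs. In a real system the field would also take a shape code produced by the image encoder, which this sketch leaves out.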

“By replacing conventional decoders by our implicit decoder for representation learning (via IM-AE) and shape generation (via IM-GAN), we demonstrate superior results for tasks such as generative shape modeling, interpolation, and single-view 3D reconstruction, particularly in terms of visual quality. Code and supplementary material are available at this https URL,” state the authors.

“Our implicit decoder does lead to cleaner surface boundaries, allowing both part movement and topology changes during interpolation. However, we do not yet know how to regulate such topological evolutions to ensure a meaningful morph between highly dissimilar shapes, e.g., those from different categories. We reiterate that currently, our network is only trained per shape category; we leave multicategory generalization for future work. At last, while our method is able to generate shapes with greater visual quality than existing alternatives, it does appear to introduce more low-frequency errors (e.g., global thinning/thickening).”

- The first column is the input image.

- The second column is the AI 3D reconstruction.

- The last column is the original 3D object of the car – ‘ground truth.’

While the neural network here was trained on cars, Chen and Zhang also use other examples like chairs and airplanes. Naftaliev explains that the input image size and output voxel resolution can be switched ‘for any required implementation.’ And if you are wondering how that last car was reconstructed, welcome to the power of AI. After training on a multitude of examples, the software has learned how to produce a plausible shape. If you are looking for tools for 3D reconstruction, try exploring classic photogrammetry techniques, with two examples being Agisoft and Autodesk ReCap.

“This type of software can benefit from the current AI research. Reconstruction of simple planes even if they are not completely seen in the image, handling light reflections or aberrations in the image, better proportion estimations and more. All these can be improved using similar neural network solutions,” says Naftaliev in conclusion.

It seems that we could be on the cusp of being able to easily extract STL files from 2D images and drawings. If the process proves repeatable and easy, anyone could draw an object and have it turned into a 3D printable file. At the same time, many images could be used to reverse engineer, remix, and improve objects that can then be 3D printed. One of the main things holding back 3D printing is that few people know CAD, and this approach could give many more people the ability to create printable 3D shapes. This could have serious and far-reaching positive impacts on 3D printing.
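To make the last step of that pipeline concrete, here is a minimal sketch of turning a voxel occupancy grid (the kind of output discussed above) into an ASCII STL file. It is an illustration under stated assumptions, not a production tool: `voxels_to_stl` and `FACES` are hypothetical names, coordinates are left in voxel units, and the result is a blocky surface made of exposed cube faces rather than a smoothed mesh.

```python
import numpy as np

# For each of the 6 axis directions: the outward normal and the four corner
# offsets of that voxel face, ordered counter-clockwise as seen from outside.
FACES = [
    ((1, 0, 0), [(1, 0, 0), (1, 1, 0), (1, 1, 1), (1, 0, 1)]),
    ((-1, 0, 0), [(0, 0, 0), (0, 0, 1), (0, 1, 1), (0, 1, 0)]),
    ((0, 1, 0), [(0, 1, 0), (0, 1, 1), (1, 1, 1), (1, 1, 0)]),
    ((0, -1, 0), [(0, 0, 0), (1, 0, 0), (1, 0, 1), (0, 0, 1)]),
    ((0, 0, 1), [(0, 0, 1), (1, 0, 1), (1, 1, 1), (0, 1, 1)]),
    ((0, 0, -1), [(0, 0, 0), (0, 1, 0), (1, 1, 0), (1, 0, 0)]),
]

def voxels_to_stl(occupancy, name="shape"):
    """Return an ASCII STL string covering the exposed faces of occupied voxels."""
    occ = np.asarray(occupancy, dtype=bool)
    # Pad with an empty border so neighbor lookups never leave the array.
    padded = np.pad(occ, 1)
    lines = [f"solid {name}"]
    for x, y, z in np.argwhere(occ).tolist():
        for (nx, ny, nz), corners in FACES:
            # Skip faces shared with another occupied voxel (interior faces).
            if padded[x + 1 + nx, y + 1 + ny, z + 1 + nz]:
                continue
            quad = [(x + cx, y + cy, z + cz) for cx, cy, cz in corners]
            # Split the square face into two triangles.
            for tri in ((quad[0], quad[1], quad[2]), (quad[0], quad[2], quad[3])):
                lines.append(f"  facet normal {nx} {ny} {nz}")
                lines.append("    outer loop")
                for vx, vy, vz in tri:
                    lines.append(f"      vertex {vx} {vy} {vz}")
                lines.append("    endloop")
                lines.append("  endfacet")
    lines.append(f"endsolid {name}")
    return "\n".join(lines) + "\n"

# Two voxels side by side: 10 exposed square faces, so 20 triangles.
grid = np.zeros((2, 1, 1), dtype=bool)
grid[:, 0, 0] = True
stl_text = voxels_to_stl(grid)
```

Writing `stl_text` to a file with an `.stl` extension should give something a slicer can open, although a real workflow would first scale the model to physical units and would likely use a surface extraction method that produces smoother geometry.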

With a focus on learning, educating, and sharing, Naftaliev strives to understand the open-source community and what makes it thrive. Check out some of their other projects here. If you are interested in artificial intelligence and the impacts it is making within the open-source and 3D printing community, read about other related stories such as living architectures, human-aware platforms, image recognition, and more.

What do you think of this news? Let us know your thoughts! Join the discussion of this and other 3D printing topics at 3DPrintBoard.com.

3D shapes generated by IM-GAN, our implicit field generative adversarial network, which was trained on 64³ or 128³ voxelized shapes. The output shapes are sampled at 512³ resolution and rendered after Marching Cubes (from ‘Learning Implicit Fields for Generative Shape Modeling’).
