MIT Tangible Media Group Conducts ‘Materiable’ Study Using Haptics with Shape Changing Interfaces

I perk up whenever I see a new project or study on 3D printing emerging from an MIT lab. Their researchers take an already fascinating subject and put a unique spin on the technology, with innovations that let us think about the future: customized 3D printed robots, fabrication via molten glass, and even new ways to harness energy for 3D printing.

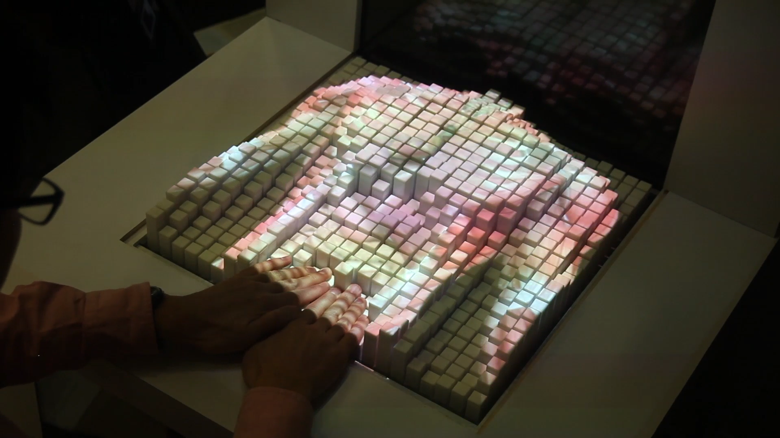

Now they are exploring how data and materials become more engaging when touch is brought into play, allowing for physical manipulation and a journey into the 3D and 4D realm, with materials that appear to shape-shift and then revert to their original form depending on what the user is doing. Researchers at MIT’s Tangible Media Group have created a project called ‘Materiable,’ which they discuss further in their recent paper, ‘Materiable: Rendering Dynamic Material Properties in Response to Direct Physical Touch with Shape Changing Interfaces,’ authored by Ken Nakagaki, Luke Vink, Jared Counts, Daniel Windham, Daniel Leithinger, Sean Follmer, and Hiroshi Ishii.

Materiable is an interaction technique that allows data and material properties to be represented through 3D shape changing interfaces in the physical world, rather than just in the digital one. The technique centers on haptics: the researchers worked to build what they refer to as a perceptive model for the properties of deformable materials in response to direct manipulation, without precise force feedback.

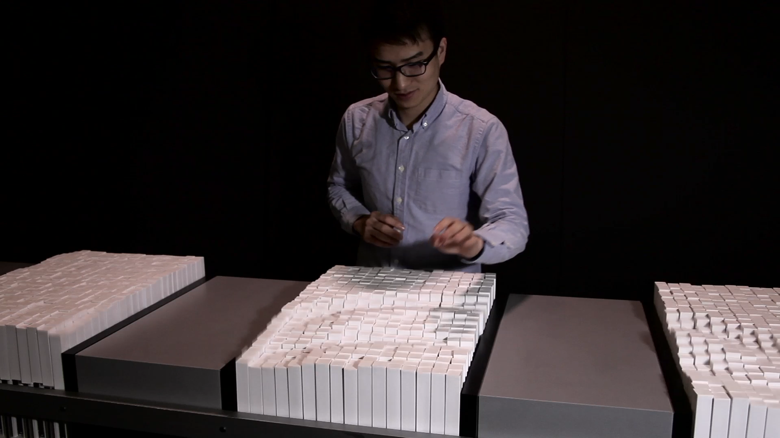

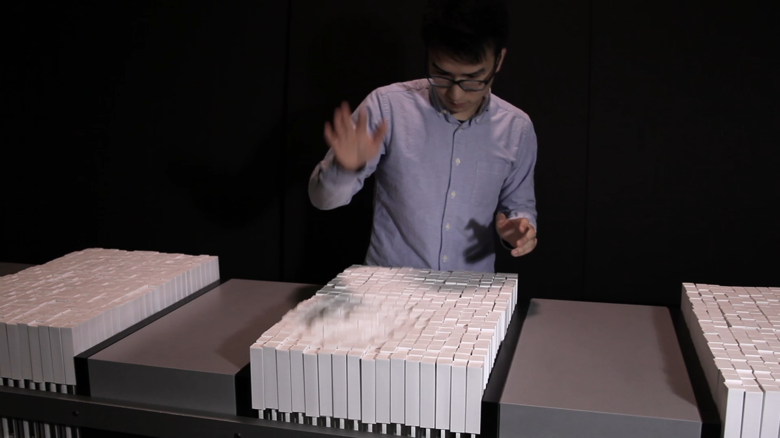

Their goal was to make a 3D proof-of-concept prototype for the study, using a pin-based shape display driven by algorithms to render different deformable materials that appeal to both sight and touch. The researchers experimented with flexibility, elasticity, and viscosity as users touched the display and caused movement. What they found was that when users are able to touch an interface, the interaction is much richer in perception. They also note that 3D printed objects can be controlled in shape and elasticity through their complex microstructures.

“While shape, color and animation of objects allows us rich physical and dynamic affordances, our physical world can afford material properties that are yet to be explored by such interfaces,” state the researchers in their paper. “Material properties of shape changing interfaces are currently limited to the material that the interface is constructed with. How can we represent various material properties by taking advantage of shape changing interfaces’ capability to allow direct, complex and physical human interactions?”

Ultimately, they work to describe:

- Interaction techniques

- Their implementation

- Future applications

- Evaluation regarding how users differentiate properties in the research system

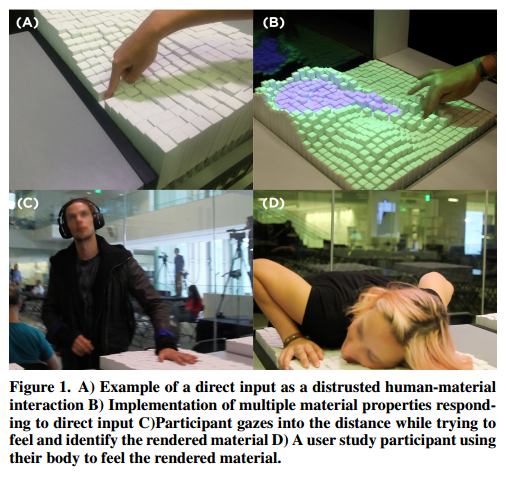

The researchers built sensors into the interfaces so that they could measure the user’s input. Participants were allowed to use any part of their body, as well as tools, to manipulate the material. The researchers initially wanted to see whether users could perceive differences in the materials. Ten people took part in the study, aged 22 to 35, with equal numbers of men and women. The two models included were the ‘Deformable Solid Model’ and the ‘Liquid Model.’

Participants were asked to observe what they saw while interacting with the shapes, and were free to interact however they liked, whether using one or several fingers or pressing down with an entire hand or body. They were then asked how they were able to discern the material properties and what material the interface brought to mind, and to rate the interfaces on a scale of 1-10 for flexibility, elasticity, or viscosity.

“Interestingly, despite being told specifically to ‘observe’ the simulation ‘visually,’ all participants described what they ‘felt’ while describing the simulation before them,” stated the researchers. “While we did not test specifically for tactile perception, at the conclusion of the study eight out of the ten users stated they were prioritizing their perception of touch over sight when asked to describe, identify and rate the rendered material simulations.”

The results were significant in that the researchers learned they could influence how material properties were perceived by changing the algorithmic parameters for flexibility, elasticity and viscosity. Participants did raise some issues, such as having a hard time rating flexibility after interacting by pushing downward, which they did not find intuitive.

“Despite this concern with interaction, participants were still able to correctly identify flexibility as a property of the simulation,” stated the researchers.

Participants were most in tune with viscosity, responding much faster and more accurately.

“We feel this correlates directly to the very active nature of the simulation which depending on the way the participant interacted with it and the level of dampening would have the simulation remain active for a longer period of time than the deformable solid implementation,” stated the researchers.
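The link the researchers draw between damping and how long a simulation stays active can be illustrated with a toy model. The sketch below is not the Materiable implementation: it assumes a single pin behaves like a unit mass on a spring with a damper, and the names `settle_steps`, `stiffness` and `damping` are hypothetical, chosen only for illustration.

```python
def settle_steps(stiffness, damping, push_depth=1.0, dt=0.01,
                 threshold=0.01, max_steps=100_000):
    """Count simulation steps until a pushed pin comes back to rest.

    Assumption: the pin is a unit mass on a spring (stiffness) with a
    damper (damping). This is an illustrative model, not the paper's.
    """
    height = -push_depth  # pin starts pushed below its rest height of 0
    velocity = 0.0
    for step in range(max_steps):
        # Spring pulls the pin back toward rest; damper resists motion.
        accel = -stiffness * height - damping * velocity
        # Semi-implicit Euler: update velocity first, then position.
        velocity += accel * dt
        height += velocity * dt
        if abs(height) < threshold and abs(velocity) < threshold:
            return step + 1
    return max_steps


if __name__ == "__main__":
    # Same stiffness, two hypothetical damping levels.
    print("low damping: ", settle_steps(20.0, 0.5), "steps")
    print("high damping:", settle_steps(20.0, 4.0), "steps")
```

With the same stiffness, the lightly damped pin keeps oscillating for many more steps than the heavily damped one, which is one way to picture why a less damped simulation would remain visibly active for longer after a touch.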

The researchers agree that a more comprehensive study with more participants would help to examine that correlation further, along with other correlations between the algorithmic variables and how the material properties are perceived.

“We would like to be able to sense the input and vary the output of force as to better match the forces applied to and given back by real world materials,” stated the researchers, who see a need for better sensing and actuation in order to vary properties more precisely in tactile perception experiments.

Overall, the results showed that tactile perception overwhelmingly outweighed visual perception. The researchers believe this is because participants were actually touching an object and causing it to move, allowing them to connect with it physically. They see a more advanced study with more complex material simulation as beneficial in the future, which would also give them the chance to improve some of the technical aspects involved, such as the shape display actuators and surface textures. The researchers also look toward more advanced evaluations based on comparison with real materials.

“We believe it is necessary to have advanced user studies to ask users to compare with actual material,” concluded the researchers. “Through such comparative user studies of our system with real material, we could indicate how hardware and software should be improved.”

There may be more to come, but this unique study has already allowed the researchers to see a future for similar shape changing interfaces, in which materials, perhaps 3D printed, can be understood through their ‘perceived material properties,’ manipulated, and then put to use in real-life applications, offering an enhanced experience in the digital realm. It has caught the attention of the internet as well, as sites like The Verge note that the 3D printed ‘pixels’ used in this project respond to touch and light, and hold incredible interest for the future. How do you think this study can make an impact on real world applications? Tell us your thoughts in the 3D Printed Materials & Haptics MIT study forum over at 3DPB.com.
