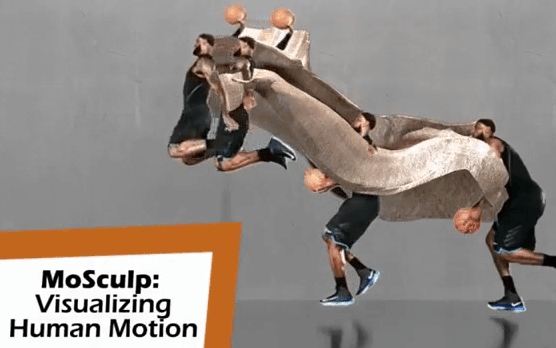

The technology is detailed in a paper entitled “MoSculp: Interactive Visualization of Space and Time.” The researchers believe that it could be a new way for athletes to study the motion of the human body.

“Imagine that you have a video of Roger Federer serving a ball in a tennis match, and a video of yourself learning tennis,” said PhD student Xiuming Zhang, lead author of the paper. “You could then build motion sculptures of both scenarios to compare them and more comprehensively study where you need to improve.”

Through a computer interface, users can navigate around the motion sculptures and view them from every angle. The sculptures can also be 3D printed.

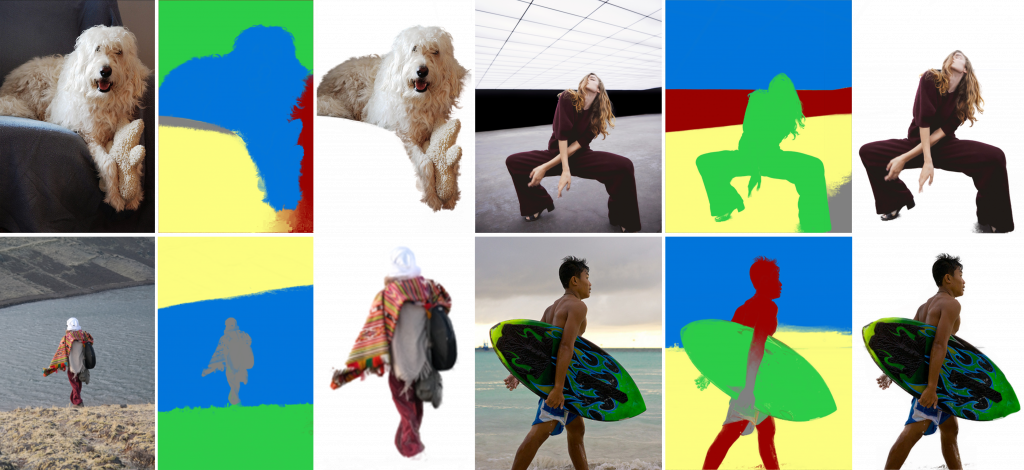

Various techniques have been used in the past to try to capture a complete visual picture of the body in motion. Stroboscopic photography, in which hundreds of photographs are snapped in rapid sequence and then stitched together like a flip book, has been a common approach. Those images are still only snapshots, however, and offer limited insight. CSAIL’s technique instead takes a video, automatically detects key 2D points on the subject’s body, and selects the best possible poses from those points to convert into 3D “skeletons.” These skeletons are then stitched together to generate a motion sculpture.
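
To make the final stitching step more concrete, here is a minimal sketch under simplifying assumptions. It is not MoSculp’s actual code. It takes per-frame 3D joint positions as given, so the 2D keypoint detection and 3D lifting are not shown. It then sweeps each bone between consecutive frames into two triangles and writes the resulting surface as an ASCII STL mesh. The joint names, the `BONES` list and all function names are hypothetical illustrations. The swept surface also has zero thickness, so it would need to be thickened (for example into tubes) before it could actually be 3D printed.

```python
import math
from typing import Dict, List, Sequence, Tuple

Point3 = Tuple[float, float, float]
Skeleton = Dict[str, Point3]          # joint name -> 3D position in one frame
Triangle = Tuple[Point3, Point3, Point3]

# Hypothetical skeleton topology: pairs of joints connected by a bone.
BONES: List[Tuple[str, str]] = [("shoulder", "elbow"), ("elbow", "wrist")]


def sweep_bones(frames: Sequence[Skeleton],
                bones: Sequence[Tuple[str, str]] = BONES) -> List[Triangle]:
    """Sweep every bone through time.

    A bone (a, b) at frame t and the same bone at frame t + 1 bound a
    quadrilateral; it is split into two triangles, so the union over all
    frames forms the ribbon-like surface traced by the moving limb.
    """
    triangles: List[Triangle] = []
    for prev, curr in zip(frames, frames[1:]):
        for a, b in bones:
            a0, b0, a1, b1 = prev[a], prev[b], curr[a], curr[b]
            triangles.append((a0, b0, b1))
            triangles.append((a0, b1, a1))
    return triangles


def facet_normal(tri: Triangle) -> Point3:
    """Unit normal from the cross product of two edges (zero if degenerate)."""
    (ax, ay, az), (bx, by, bz), (cx, cy, cz) = tri
    ux, uy, uz = bx - ax, by - ay, bz - az
    vx, vy, vz = cx - ax, cy - ay, cz - az
    nx, ny, nz = uy * vz - uz * vy, uz * vx - ux * vz, ux * vy - uy * vx
    length = math.sqrt(nx * nx + ny * ny + nz * nz)
    if length == 0.0:
        return (0.0, 0.0, 0.0)
    return (nx / length, ny / length, nz / length)


def to_ascii_stl(triangles: Sequence[Triangle],
                 name: str = "motion_sculpture") -> str:
    """Serialize triangles in the plain-text STL format used by slicers."""
    lines = [f"solid {name}"]
    for tri in triangles:
        nx, ny, nz = facet_normal(tri)
        lines.append(f"  facet normal {nx:.6f} {ny:.6f} {nz:.6f}")
        lines.append("    outer loop")
        for x, y, z in tri:
            lines.append(f"      vertex {x:.6f} {y:.6f} {z:.6f}")
        lines.append("    endloop")
        lines.append("  endfacet")
    lines.append(f"endsolid {name}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Three made-up frames of an arm raising; the shoulder stays fixed.
    frames = [
        {"shoulder": (0.0, 0.0, 1.5), "elbow": (0.3, 0.0, 1.2), "wrist": (0.6, 0.0, 1.0)},
        {"shoulder": (0.0, 0.0, 1.5), "elbow": (0.3, 0.1, 1.3), "wrist": (0.6, 0.2, 1.2)},
        {"shoulder": (0.0, 0.0, 1.5), "elbow": (0.3, 0.2, 1.4), "wrist": (0.5, 0.4, 1.4)},
    ]
    tris = sweep_bones(frames)
    print(len(tris))  # 2 frame transitions x 2 bones x 2 triangles = 8
    print(to_ascii_stl(tris).splitlines()[0])  # solid motion_sculpture
```

Because the shoulder never moves in this toy input, half of the shoulder-to-elbow triangles collapse to zero area and get a zero normal. A real meshing pipeline would drop such faces and add thickness before sending the model to a printer.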

Users can customize the sculptures in several ways: focusing on specific body parts, assigning different 3D printing materials to distinguish among parts, or adjusting the lighting. In a user study, the researchers found that more than 75 percent of subjects felt MoSculp provided a more detailed visualization for studying movement than standard photographic techniques.

“Dance and highly-skilled athletic motions often seem like ‘moving sculptures’ but they only create fleeting and ephemeral shapes,” said Courtney Brigham, communications lead at Adobe. “This work shows how to take motions and turn them into real sculptures with objective visualizations of movement, providing a way for athletes to analyze their movements for training, requiring no more equipment than a mobile camera and some computing time.”

The system works best with larger movements, such as throwing a ball or leaping. It also handles situations that might obstruct or complicate movement, such as a subject wearing loose clothing or carrying an object. For now, the system constructs motion sculptures of only one person at a time, but the researchers hope to extend it to multiple people soon. They believe it could then be used to study topics such as social disorders, interpersonal interactions, and team dynamics.

The paper will be presented at the User Interface Software and Technology (UIST) conference, which will take place in Berlin from October 14 to 17. Authors of the paper include Xiuming Zhang, Tali Dekel, Tianfan Xue, Andrew Owens, Qiurui He, Jiajun Wu, Stefanie Mueller, and William T. Freeman.
