Depth-Sensing Cameras Will Soon Turn Every Smartphone into a High-Quality 3D Scanner

It looks like the depth-sensing time-of-flight camera technology developed and popularized by projects like the Xbox Kinect and the ZCam is already showing up in the next generation of smartphones. With Google announcing at CES 2016 that its Project Tango will bring computer vision, depth sensing, and motion tracking technology to Lenovo consumer mobile devices this year, full 3D video is just one of dozens of applications that will soon be available. With depth sensing, smartphones will become hyper-aware of their immediate surroundings and can be used for everything from virtual reality applications to a version of Google Maps with walking directions inside buildings to accurate sizing suggestions when buying clothing and shoes online.

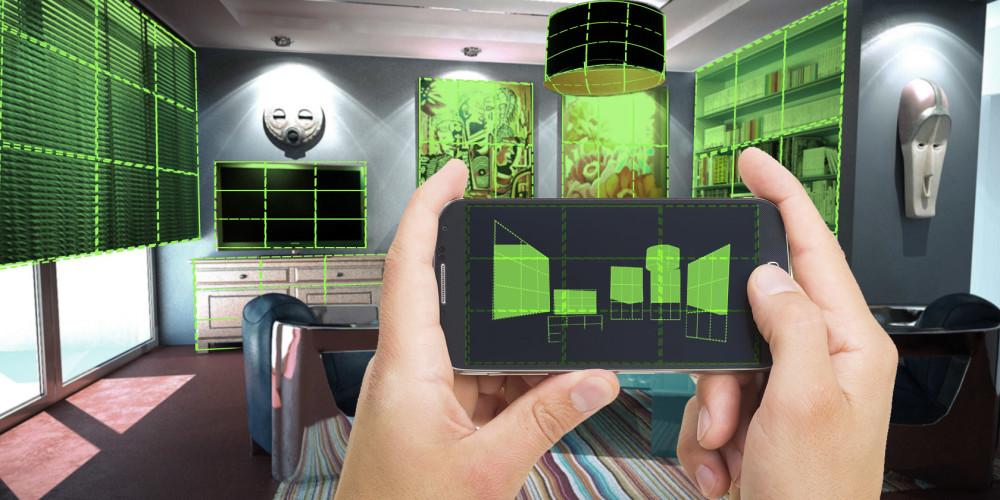

Range-imaging time-of-flight cameras are highly advanced LiDAR systems that replace standard point-by-point laser scanning with a single light pulse covering the whole scene, giving them full spatial awareness. The camera measures the time it takes light to return from surrounding objects, combines that with video data, and creates real-time 3D images that can be used to track facial or hand movements, map out an entire room, or even remove or overlay 3D objects or backgrounds in an image. The technology can also be adapted as a high-speed 3D laser scanner capable of capturing more than 150 images per second. Realistically, it could turn the everyday smartphone into an incredibly advanced handheld 3D scanner.
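To make the time-of-flight idea a little more concrete, here is a minimal sketch in Python. The first function applies the basic pulse relationship (light travels out and back, so distance is half of speed times elapsed time). The second turns a per-pixel depth map into 3D points using a simple pinhole camera model, which is one common way a depth frame becomes a map of a room. The camera intrinsics (`fx`, `fy`, `cx`, `cy`) and the function names are hypothetical placeholders, not the calibration or API of any actual Tango or Kinect device.

```python
from typing import List, Tuple

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_distance_m(round_trip_s: float) -> float:
    """Distance to a surface from the round-trip time of a light pulse.

    The pulse travels out and back, so the one-way distance is half
    of (speed of light * elapsed time).
    """
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def depth_map_to_points(
    depth: List[List[float]], fx: float, fy: float, cx: float, cy: float
) -> List[Tuple[float, float, float]]:
    """Back-project a per-pixel depth map (meters) into 3D points.

    Uses a pinhole camera model. fx, fy, cx, cy are hypothetical
    intrinsics; a real device would report its own calibration.
    """
    points = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z <= 0:  # zero depth means no return was measured for this pixel
                continue
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            points.append((x, y, z))
    return points


if __name__ == "__main__":
    # A return after 20 nanoseconds means a surface roughly 3 m away.
    print(round(tof_distance_m(20e-9), 3))  # 2.998
    tiny_depth_map = [[1.0, 0.0], [2.0, 2.0]]
    print(depth_map_to_points(tiny_depth_map, fx=1.0, fy=1.0, cx=0.5, cy=0.5))
```

The timing numbers show why this is hard to build: a difference of one nanosecond in the measured round trip corresponds to about 15 cm of distance, so the sensor's timing has to be extremely precise.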

While 3D video and high-speed laser scanners are not new technology, cost has kept them from being adopted by mainstream users. Full-featured 3D cameras and laser scanners typically start at around $10,000, and higher-end models can cost more than $200,000. But the cost of the technology is dropping rapidly thanks to upcoming consumer-grade products like those from Google's Project Tango, not to mention Intel RealSense and the expected Apple depth-sensing cameras based on PrimeSense technology. With this technology arriving in smartphones, a world of new, life-changing apps and functionality will be available to virtually everyone.

One hurdle that must be cleared before mainstream users can take advantage of the full range of depth-sensing features is processing and compressing the large amounts of data needed to generate a fully realized 3D image. But thanks to advances in smartphone processors and data compression, app developers are already hard at work on the new platform. While hardware manufacturers may need to invest heavily in software development to support the new apps, many of the industry's biggest names are already on board.
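For a rough sense of the data volumes involved, the sketch below works out the uncompressed bandwidth of a depth stream. The resolution, bit depth, and frame rate are assumptions chosen for illustration, not the specifications of any shipping phone. It also shows delta encoding, one very simple lossless idea that exploits the fact that neighboring depth readings tend to be similar; it is not the compression scheme any particular device uses.

```python
from typing import List


def raw_depth_rate_mb_per_s(width: int, height: int, bits_per_pixel: int, fps: float) -> float:
    """Uncompressed depth-stream bandwidth in megabytes per second (1 MB = 10**6 bytes)."""
    bytes_per_frame = width * height * bits_per_pixel / 8
    return bytes_per_frame * fps / 1e6


def delta_encode(row: List[int]) -> List[int]:
    """Keep the first value, then store each value as the difference from its neighbor.

    Smooth surfaces produce many small differences, which a later
    entropy coder can store in far fewer bits than the raw values.
    """
    if not row:
        return []
    return [row[0]] + [b - a for a, b in zip(row, row[1:])]


def delta_decode(deltas: List[int]) -> List[int]:
    """Invert delta_encode with a running sum (lossless)."""
    out: List[int] = []
    total = 0
    for d in deltas:
        total += d
        out.append(total)
    return out


if __name__ == "__main__":
    # Hypothetical sensor: 320x240 depth pixels, 16 bits each, 30 frames per second.
    print(raw_depth_rate_mb_per_s(320, 240, 16, 30))  # 4.608
    depths_mm = [1500, 1501, 1501, 1503]
    print(delta_encode(depths_mm))  # [1500, 1, 0, 2]
```

Even this modest hypothetical sensor produces about 276 MB per minute of raw depth data before any color video is added, which is why processing and compression matter so much on a phone.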

The ability of depth sensors to capture large amounts of 3D information will completely change the way digital photography is done. Users would be able to seamlessly remove objects, isolate single objects, and even remove and replace the entire background of a picture. Navigation software like Google Maps would be more accurate than ever by combining GPS data with an accurate sense of the user's immediate surroundings, and could even offer navigation inside buildings. Devices could also be developed to help the visually impaired navigate the world around them, and the hearing impaired could have sign language translated in real time.
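Background removal is one of the easier features to picture in code, because once every pixel has a distance, separating a subject from the scene behind it can be as simple as a depth threshold. The sketch below assumes the depth map is already aligned pixel-for-pixel with the color image, which real devices have to achieve through calibration; the function name and the fixed cutoff are illustrative choices, and production tools would refine the mask edges far more carefully.

```python
from typing import List, Optional, Tuple

RGB = Tuple[int, int, int]


def remove_background(
    image: List[List[RGB]],
    depth_m: List[List[float]],
    max_depth_m: float,
    replacement: Optional[List[List[RGB]]] = None,
) -> List[List[RGB]]:
    """Keep pixels closer than max_depth_m and replace everything else.

    Assumes depth_m is aligned with image (same size, same pixel grid).
    Pixels with no depth reading (0.0) are treated as background.
    If no replacement image is given, background pixels become black.
    """
    out: List[List[RGB]] = []
    for y, (img_row, depth_row) in enumerate(zip(image, depth_m)):
        new_row: List[RGB] = []
        for x, (pixel, z) in enumerate(zip(img_row, depth_row)):
            is_foreground = 0.0 < z <= max_depth_m
            if is_foreground:
                new_row.append(pixel)
            elif replacement is not None:
                new_row.append(replacement[y][x])
            else:
                new_row.append((0, 0, 0))
        out.append(new_row)
    return out


if __name__ == "__main__":
    image = [[(255, 0, 0), (0, 255, 0), (0, 0, 255)]]
    depth = [[0.8, 3.0, 0.0]]  # subject at 0.8 m, wall at 3 m, one pixel with no reading
    print(remove_background(image, depth, max_depth_m=1.5))
    # [[(255, 0, 0), (0, 0, 0), (0, 0, 0)]]
```

Passing a second image as `replacement` swaps in a new background instead of blacking it out, which is the "remove and replace the entire background" case.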

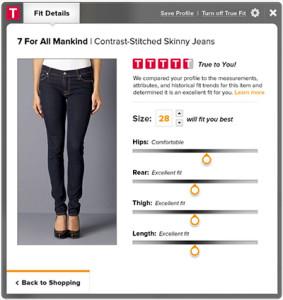

Another major application could completely change the way we shop for clothing by offering highly accurate sizing without stepping into a store or physically trying anything on. Depth sensors on smartphones would turn a 3D selfie into highly accurate body measurements that retailers could use to let customers virtually try on clothing or shoes and verify fit. Apps using this technology already exist, but with a 3D camera in every customer's home, the online clothing market could explode. It could even let high-end retailers offer custom tailoring or fabricate bespoke clothing.
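As an illustration of how a 3D scan might become a body measurement, the sketch below estimates a circumference (say, a waist) by taking a thin horizontal slice of a scanned point cloud and measuring the perimeter of its convex hull, since a tape measure pulled around the body follows roughly that outline. This is a simplified technique chosen for the example, not how any particular sizing app works; it assumes the scan is in meters with `y` as the vertical axis, and the names and slice tolerance are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Point2D = Tuple[float, float]
Point3D = Tuple[float, float, float]


def convex_hull(points: List[Point2D]) -> List[Point2D]:
    """Andrew's monotone chain algorithm; returns hull vertices counter-clockwise."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts

    def cross(o: Point2D, a: Point2D, b: Point2D) -> float:
        return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

    lower: List[Point2D] = []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    upper: List[Point2D] = []
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]


def slice_circumference(points: Sequence[Point3D], height: float, tolerance: float = 0.005) -> float:
    """Approximate a tape-measure circumference at a given height (y, meters).

    Points within `tolerance` of the height are projected onto the x-z
    plane, and the perimeter of their convex hull is returned.
    """
    ring = [(x, z) for x, y, z in points if abs(y - height) <= tolerance]
    hull = convex_hull(ring)
    if len(hull) < 2:
        return 0.0
    return sum(math.dist(hull[i], hull[(i + 1) % len(hull)]) for i in range(len(hull)))


if __name__ == "__main__":
    # Synthetic "scan": a 0.15 m radius ring at y = 1.0 and a wider ring at y = 1.3.
    angles = [2 * math.pi * k / 360 for k in range(360)]
    scan = [(0.15 * math.cos(t), 1.0, 0.15 * math.sin(t)) for t in angles]
    scan += [(0.30 * math.cos(t), 1.3, 0.30 * math.sin(t)) for t in angles]
    print(round(slice_circumference(scan, height=1.0), 3))  # close to 2 * pi * 0.15
```

Only the slice at the requested height is measured, so the wider ring at 1.3 m does not affect the result.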

Naturally, virtual reality and 3D printing would suddenly become accessible to just about everyone. VR-enabled smartphones paired with VR headsets would be able to generate complete 3D environments and let users interact with them in real time. Games and apps could also automatically create levels and interactive environments from a user's surroundings, turning a living room into a game level or a virtual workspace. And the ability to capture highly detailed 3D images means that almost any real-world object could be 3D scanned, converted into a 3D printable file, and sent directly to online 3D printing service providers. Not only would that turn rapid prototyping into instant prototyping, it would also allow artists and designers to create something and immediately offer 3D printed copies of their work to the rest of the world.
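The "converted into a 3D printable file" step usually means exporting a triangle mesh in a format such as STL, which most 3D printing services accept. The sketch below writes a mesh as ASCII STL, computing each facet normal from the counter-clockwise vertex order. It assumes the hard part, reconstructing a watertight mesh from the raw depth scan, has already been done; the function names are illustrative, and the example mesh is a simple tetrahedron rather than real scan data.

```python
import math
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]
Triangle = Tuple[Vec3, Vec3, Vec3]


def facet_normal(a: Vec3, b: Vec3, c: Vec3) -> Vec3:
    """Unit normal via the right-hand rule (counter-clockwise vertices face outward)."""
    u = (b[0] - a[0], b[1] - a[1], b[2] - a[2])
    v = (c[0] - a[0], c[1] - a[1], c[2] - a[2])
    n = (u[1] * v[2] - u[2] * v[1], u[2] * v[0] - u[0] * v[2], u[0] * v[1] - u[1] * v[0])
    length = math.sqrt(n[0] ** 2 + n[1] ** 2 + n[2] ** 2)
    if length == 0.0:
        return (0.0, 0.0, 0.0)  # degenerate (zero-area) triangle
    return (n[0] / length, n[1] / length, n[2] / length)


def to_ascii_stl(triangles: Sequence[Triangle], name: str = "scan") -> str:
    """Serialize triangles as an ASCII STL document."""
    lines: List[str] = [f"solid {name}"]
    for a, b, c in triangles:
        nx, ny, nz = facet_normal(a, b, c)
        lines.append(f"  facet normal {nx:e} {ny:e} {nz:e}")
        lines.append("    outer loop")
        for x, y, z in (a, b, c):
            lines.append(f"      vertex {x:e} {y:e} {z:e}")
        lines.append("    endloop")
        lines.append("  endfacet")
    lines.append(f"endsolid {name}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    o, x, y, z = (0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)
    # Tetrahedron with every face wound counter-clockwise when seen from outside.
    tetra = [(o, y, x), (o, x, z), (o, z, y), (x, y, z)]
    with open("scan.stl", "w") as f:
        f.write(to_ascii_stl(tetra))
```

ASCII STL is easy to read and debug; binary STL is far more compact and is the usual choice for large scanned meshes.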

Of course, all of those capabilities are probably still a while away in terms of product availability, but the smartphone cycle moves very quickly. Because consumers typically replace their phones every eighteen months, hardware manufacturers and software and app developers are always pushing to release new devices with the best new toys. With the first handful of depth-sensor-enabled devices due out later this year, it is not hard to imagine 3D cameras becoming standard on smartphones within the next few years. Are these features you are interested in for your smartphone? Discuss in the Smartphones Go 3D forum over at 3DPB.com.

Here is a video explaining how Google’s Project Tango works and what it can be used for:
